AI Risk Management for M&A: What Founders Need to Know

When acquiring an AI company, you're not just buying technology - you're inheriting complex systems, regulatory risks, and talent dependencies. Unlike other tech deals, AI-focused M&A requires specialized due diligence to address unique challenges like unlicensed training data, exaggerated automation claims, and reliance on key employees. Failing to assess these risks early can lead to costly post-deal issues, such as legal penalties, loss of critical talent, or even the forced deletion of the AI models you paid for.

Key Takeaways:

- Data Risks: Improperly sourced training data can lead to legal action and forced model deletion.

- Talent Retention: AI companies often depend on a small group of skilled engineers; losing them can undermine the acquisition.

- Verification: Claims about AI capabilities must be validated to avoid overpaying for underperforming assets.

- Governance: Strong post-deal governance ensures compliance and smooth integration of AI systems.

By conducting thorough technical due diligence and prioritizing early risk identification, you can protect your investment and maximize the value of the acquisition.



Primary AI Risks in M&A Transactions

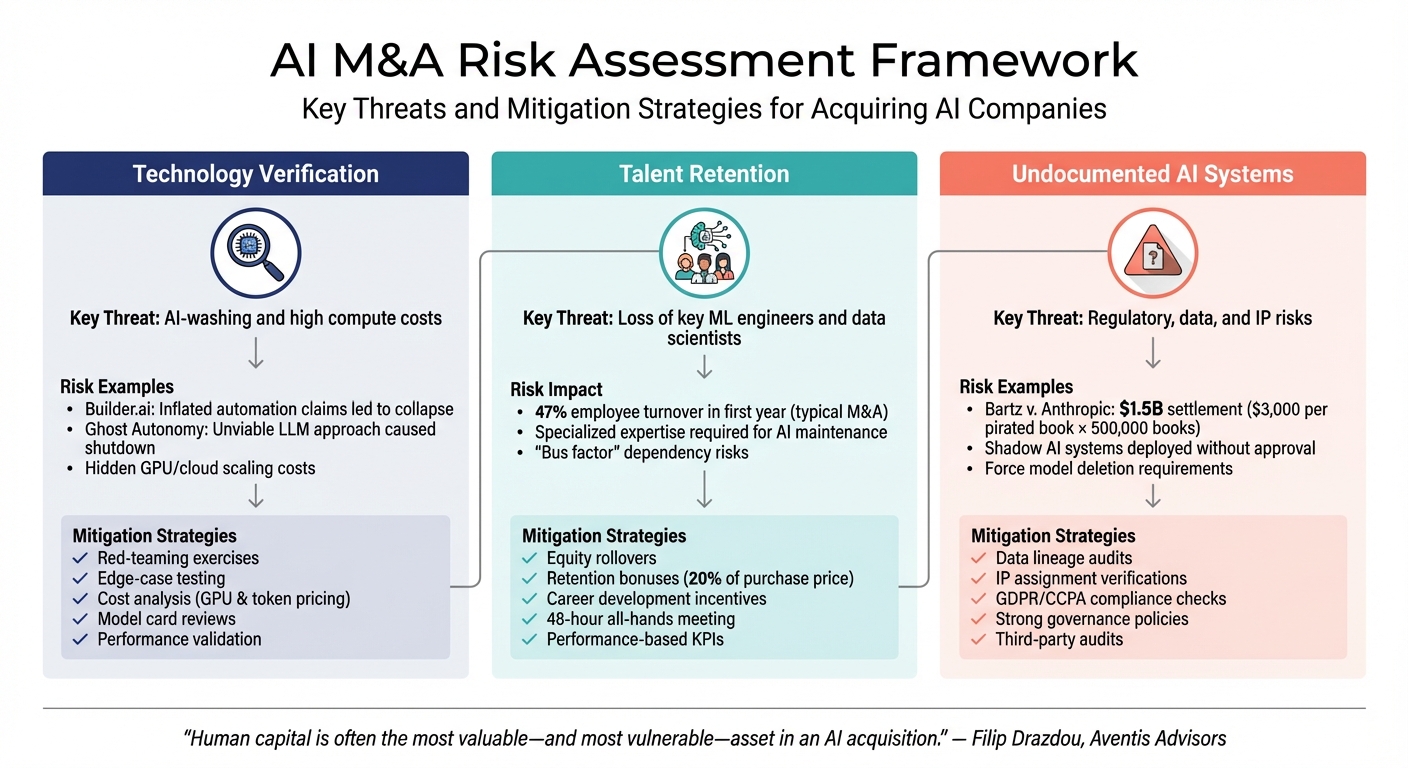

AI M&A Risk Assessment Framework: Key Threats and Mitigation Strategies

Acquiring an AI company comes with three major risks: technology that does not perform as claimed, the loss of key talent, and undocumented AI systems that carry hidden liabilities. If overlooked, these risks can significantly reduce the value of your acquisition.

Technology Verification and Valuation Accuracy

One of the biggest challenges is identifying "AI-washing", where companies overstate their automation capabilities while relying heavily on manual processes. A prime example is Builder.ai, which faced collapse after inflated claims about its automation and subsequent leadership turmoil[1].

Another cautionary tale is Ghost Autonomy, a self-driving AI startup that shut down in early 2024. The company relied on Large Language Models (LLMs) for autonomous driving, but skepticism about the feasibility of its approach led to its downfall[1]. These examples highlight the critical need to verify that the AI technology you’re acquiring performs as claimed.

"The difference between perceived value and validated value can be substantial." - Skadden, Arps, Slate, Meagher & Flom LLP[4]

Beyond functionality, it’s essential to evaluate the operational costs of the AI system. High GPU and cloud costs required for scaling can make a seemingly promising technology commercially unviable[4].
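A back-of-the-envelope check can make this cost question concrete. The sketch below is illustrative only: the `ServingCosts` fields and every number in it are hypothetical placeholders, not figures from any real deal, and a real analysis would use the target's actual cloud invoices and usage logs.

```python
from dataclasses import dataclass


@dataclass
class ServingCosts:
    """Hypothetical inputs; replace with figures from the target's cloud invoices."""
    gpu_hourly_usd: float           # cost of one GPU instance per hour
    requests_per_gpu_hour: float    # sustained throughput of one instance
    revenue_per_request_usd: float  # average revenue earned per request


def cost_per_request(costs: ServingCosts) -> float:
    """Compute cost attributable to a single request."""
    return costs.gpu_hourly_usd / costs.requests_per_gpu_hour


def compute_margin(costs: ServingCosts) -> float:
    """Share of revenue left after compute. Negative means each request loses money."""
    return 1.0 - cost_per_request(costs) / costs.revenue_per_request_usd


# Illustrative numbers only.
example = ServingCosts(gpu_hourly_usd=4.0, requests_per_gpu_hour=2000, revenue_per_request_usd=0.01)
print(f"cost per request: ${cost_per_request(example):.4f}")
print(f"compute margin:   {compute_margin(example):.0%}")
```

With these placeholder numbers, compute consumes about 20% of revenue. If throughput dropped to 200 requests per GPU-hour at the same price, each request would cost more than it earns, which is the kind of result that should change the valuation conversation.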

While verifying the tech is key, you also need to focus on retaining the team behind it.

Talent Retention and Team Continuity

An AI company's value is often tied to its team, especially its machine learning (ML) engineers and data scientists. Unlike traditional software, where a larger pool of developers can handle maintenance, AI systems need highly specialized expertise for tuning and development. Losing these key personnel can make the acquired technology difficult - or even impossible - to maintain.

"Human capital is often the most valuable - and most vulnerable - asset in an AI acquisition." - Filip Drazdou, Director, Aventis Advisors[1]

To avoid losing critical talent, it's crucial to implement retention strategies. These might include equity rollovers, bonuses tied to vesting schedules, and career development plans. Such measures can help ensure team continuity during the transition.

But even with a strong team and verified technology, hidden risks can lurk in undocumented AI systems.

Undocumented AI Systems and Hidden Liabilities

Undocumented AI systems - those deployed by employees without formal approval - pose another significant risk. These "shadow" systems can bring regulatory, intellectual property (IP), and security challenges that may only surface after the acquisition is complete.

The 2025 settlement in Bartz v. Anthropic shows how large these hidden liabilities can be. The lawsuit alleged that Anthropic used pirated books to train its AI models, and it led to a proposed class action settlement of about $1.5 billion, calculated at roughly $3,000 per book for about 500,000 pirated works[5].

"AI innovation does not excuse weak provenance, privacy gaps, or thin governance." - Shumaker, Loop & Kendrick, LLP[5]

If an AI model is trained on illegally sourced data, regulators may require the deletion of the model and its derivatives, effectively erasing its value. Additionally, undocumented systems can lead to trade secret violations if proprietary data has been improperly used. To mitigate these risks, conduct thorough audits of data lineage, verify IP assignments, and enforce strict governance policies post-acquisition[5].

| Risk Category | Key Threat | Mitigation |
|---|---|---|
| Technology Verification | AI-washing and high compute costs | Red-teaming, edge-case testing, and cost analysis |
| Talent Retention | Loss of key ML engineers and data scientists | Equity rollovers, retention bonuses, and career development incentives |
| Undocumented AI Systems | Regulatory, data, and IP risks | Data audits, IP verifications, and strong governance policies |

How to Conduct Technical Due Diligence

Technical due diligence checks whether AI systems perform as claimed, going beyond surface-level assurances. Unlike traditional software, AI systems are probabilistic: the same input can produce different outputs, so a single passing test proves little. This requires a validation process tailored to the unique characteristics of AI.

Evaluating AI Models and Algorithms

Start by requesting model cards and detailed performance reports that state the accuracy metrics and how they were measured. Additionally, assess how the system handles rate limits, quotas, and third-party service downtimes - especially if it relies on external large language model APIs.

"Financial diligence tells you what the company is worth today. Technical diligence, particularly AI diligence, tells you whether it will stay that way tomorrow." - Nita Nehru, Kickdrum [6]

Scalability and infrastructure costs are equally important. For self-hosted models, evaluate GPU compute costs, and for third-party services, analyze token-based pricing structures to gauge long-term financial viability. Another critical factor is the bus factor - a measure of how dependent the codebase is on a single developer. A codebase reliant on one individual poses significant risks, especially for team continuity.
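One rough way to put a number on the bus factor is to look at who wrote the commits. The sketch below uses a common heuristic, not a standard definition: it counts the smallest number of authors who together account for more than half of all commits. The function name, the 50% threshold, and the author names are all assumptions for illustration. In practice the author list would come from the repository history, and commit counts are only a proxy for knowledge.

```python
from collections import Counter


def bus_factor(commit_authors: list[str], threshold: float = 0.5) -> int:
    """Smallest number of authors who together made more than `threshold` of all commits."""
    if not commit_authors:
        return 0
    counts = Counter(commit_authors)
    total = len(commit_authors)
    covered = 0
    # Walk authors from most to least active until the threshold is crossed.
    for n_authors, (_, n_commits) in enumerate(counts.most_common(), start=1):
        covered += n_commits
        if covered / total > threshold:
            return n_authors
    return len(counts)


# Hypothetical histories: one concentrated, one evenly spread.
concentrated = ["ana"] * 70 + ["ben"] * 20 + ["chi"] * 10
balanced = ["ana", "ben", "chi", "dev"] * 25

print(bus_factor(concentrated))
print(bus_factor(balanced))
```

A result of 1, as in the concentrated history here, means a single engineer accounts for most of the work and should probably be at the top of the retention list.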

Transparency and bias in algorithms are also key areas to examine. Verify that the algorithms have undergone rigorous testing for bias and that mechanisms exist to monitor and address errors or hallucinations. If the AI generates code, ensure there's a robust human review process in place. Machine-generated code might seem functional but could hide deeper structural flaws.

Once the models are validated, attention should shift to the integrity and ownership of the data.

Reviewing Data Quality and Ownership Rights

Data quality and ownership are foundational to technical due diligence. Trace the complete data lineage to ensure all training data was legally sourced. Using unauthorized data could lead to legal issues and costly remediation.
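A lineage review often starts with a simple inventory: every dataset, where it came from, and under what license. The sketch below shows one possible shape for that check. The `DatasetRecord` fields and the sample entries are hypothetical, and an empty result only means the paperwork exists, not that the licenses actually permit training.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DatasetRecord:
    """One row of a hypothetical training-data inventory."""
    name: str
    source: Optional[str]    # where the data came from (vendor, URL, internal system)
    license: Optional[str]   # license or contract covering its use
    used_in_training: bool


def lineage_gaps(records: list[DatasetRecord]) -> list[str]:
    """Names of training datasets with no documented source or no documented license."""
    return [
        r.name
        for r in records
        if r.used_in_training and (not r.source or not r.license)
    ]


# Illustrative inventory only.
inventory = [
    DatasetRecord("support_tickets", "internal CRM export", "customer terms of service", True),
    DatasetRecord("web_scrape_2023", "unknown", None, True),
    DatasetRecord("books_corpus", None, None, True),
    DatasetRecord("old_experiment", None, None, False),
]

print(lineage_gaps(inventory))
```

Every name this returns is a question for the seller's data room before signing, not after.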

Confirm that all contributors - whether founders, employees, contractors, or advisors - have signed IP assignment and confidentiality agreements. This is crucial because copyright and patent laws in regions like the US, UK, and EU generally don’t protect works created solely by AI. Documenting human involvement is necessary to secure intellectual property rights.

Additionally, map out all categories of personal data used in training, fine-tuning, and deployment to ensure compliance with regulations like GDPR and CCPA, as well as narrower laws such as Illinois's Biometric Information Privacy Act (BIPA). Conduct adversarial testing to simulate attacks on AI models and test system integrity. Review third-party licenses for APIs, model weights, and open-source components to confirm these can be legally transferred during an acquisition.

Strong internal checks pave the way for independent third-party audits, which add another layer of validation.

Using Third-Party Audits for Validation

Independent audits are invaluable for tackling the "black box" nature of AI systems. They help validate claims about accuracy, scalability, and proprietary capabilities.

"When you buy a company with AI capabilities, you're not just buying software - you're inheriting data relationships, algorithmic decisions, regulatory compliance obligations, and risks that didn't exist five years ago." - Jason Lee [2]

Third-party auditors can distinguish between superficial AI claims and genuine, proprietary innovations. They evaluate how systems handle messy, real-world data and identify emerging security threats like prompt injection, data poisoning, and model theft - risks that traditional cybersecurity measures might miss. These auditors should also verify reproducibility by replicating performance benchmarks across different environments. Additionally, they assess cost dynamics, such as GPU infrastructure expenses and token-based pricing, to confirm that the AI system remains economically viable at scale.
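The reproducibility check described above comes down to comparing the seller's claimed numbers with what an auditor measures independently. A minimal sketch, assuming metrics are reported as simple name-to-value pairs and that a tolerance of 0.02 is acceptable (both are assumptions, as are the metric values shown):

```python
def reproducibility_report(
    claimed: dict[str, float],
    reproduced: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, str]:
    """Label each claimed metric as 'ok', 'mismatch', or 'not reproduced'."""
    report = {}
    for metric, claimed_value in claimed.items():
        if metric not in reproduced:
            report[metric] = "not reproduced"
        elif abs(reproduced[metric] - claimed_value) <= tolerance:
            report[metric] = "ok"
        else:
            report[metric] = "mismatch"
    return report


# Hypothetical numbers: the seller's deck versus an auditor's rerun.
claimed = {"accuracy": 0.94, "f1": 0.91, "recall": 0.88}
reproduced = {"accuracy": 0.935, "f1": 0.85}

for metric, status in reproducibility_report(claimed, reproduced).items():
    print(f"{metric}: {status}")
```

The right tolerance depends on the metric and the size of the test set, so it is worth agreeing on it with the auditor before the rerun rather than after.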

A thorough audit ensures production reliability, maintainable workflows, reproducible results, and clear documentation - key factors for a trustworthy acquisition. Founders can seek guidance from seasoned advisors like Phoenix Strategy Group to navigate the complexities of AI technical due diligence.

Managing Organizational and Cultural Integration

Once due diligence is complete, the real challenge begins: aligning organizational cultures. This step is critical for safeguarding both technological assets and the people behind them. While technical validation is important, the human side of integration often determines whether an AI-focused merger or acquisition succeeds. In fact, cultural misalignment accounts for nearly 30% of failed mergers, and employee turnover can skyrocket to 47% within the first year of an acquisition [7]. For companies acquiring AI-native businesses, it’s crucial to address operational culture gaps and talent retention issues that could undermine the deal.

Bridging Organizational Culture Gaps

AI teams often operate differently from traditional organizations. For instance, a larger acquiring company may prioritize standardized processes and regulatory compliance, while the target AI startup may thrive on fast-paced experimentation and minimal documentation. To bridge these differences, standardizing AI workflows can help without forcing one side to completely abandon its approach. Shared prompt libraries, for example, can unify methodologies, reduce duplicate efforts, and encourage collaboration [3]. Tools like automated document review systems and AI-powered task managers also help establish a shared operational framework.

"Adaptation isn't surrender - platforms notice who's constructive vs. obstructive. The former get latitude; the latter get managed." - Taras [7]

Early alignment on AI governance is another key step. Clear protocols for handling data, using models, and defining decision-making responsibilities can ease the integration of an AI startup’s technical culture into the acquiring company’s structure [3]. While back-office processes should be standardized, maintaining flexibility in client-facing workflows can help preserve the startup’s agility [7]. Additionally, scheduling an all-hands meeting within 48 hours of closing the deal can set the tone, address concerns about job security and benefits, and clarify reporting structures [7].

Once operational processes are aligned, the focus shifts to keeping top talent engaged and motivated during the transition.

Keeping Key Talent After the Acquisition

Retaining critical AI talent goes beyond offering competitive salaries. Many acquirers link up to 20% of the purchase price to performance-based KPIs identified during the diligence phase [3]. These earn-outs align founders and key employees with the acquiring company’s goals while minimizing disputes post-acquisition.
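To see what a 20% earn-out means in dollars, a short calculation helps. The sketch below assumes a simple linear payout capped at 100% of the contingent amount. That schedule, the function names, and the $50 million price are illustrative assumptions; real earn-outs are negotiated and often use tiers or cliffs instead.

```python
def earnout_split(purchase_price: float, earnout_share: float = 0.20) -> tuple[float, float]:
    """Split a purchase price into (paid at close, contingent on KPIs)."""
    if not 0.0 <= earnout_share <= 1.0:
        raise ValueError("earnout_share must be between 0 and 1")
    contingent = purchase_price * earnout_share
    return purchase_price - contingent, contingent


def earnout_payout(contingent: float, kpi_attainment: float) -> float:
    """Linear payout: attainment of 0.75 pays 75% of the contingent amount, capped at 100%."""
    return contingent * max(0.0, min(kpi_attainment, 1.0))


# Hypothetical deal: $50M price, 20% tied to KPIs, 75% of targets hit.
upfront, contingent = earnout_split(50_000_000)
print(f"paid at close: ${upfront:,.0f}")
print(f"contingent:    ${contingent:,.0f}")
print(f"payout at 75%: ${earnout_payout(contingent, 0.75):,.0f}")
```

In this example, $10 million of the price depends on post-close performance, which is why the KPIs need to be defined precisely during diligence.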

Before designing retention packages, AI tools can be used to assess operational dependencies. These tools can identify concentration risks and pinpoint individuals who hold essential knowledge about the acquired company’s AI models [3]. While retention bonuses are common - used by about 60% of organizations during M&A transitions - they are most effective when paired with non-financial strategies [7]. For instance, middle managers, who often hold critical institutional knowledge, may feel a loss of autonomy during integration. Personalized discussions during the first week can help clarify their roles, set expectations, and reinforce their importance.

Another useful approach is creating an integration risk register based on insights from the diligence phase. This register can highlight potential areas of cultural or operational friction before the deal is finalized. Assigning clear roles for financial, legal, and operational reviews early on can help incoming teams feel valued and provide structure during the transition [3].

Phoenix Strategy Group specializes in guiding growth-stage companies through the complexities of AI-focused M&A. From technical due diligence to post-acquisition planning, they aim to protect both financial investments and the human capital that drives innovation.

Creating a Post-Acquisition Integration Plan

Once you've secured alignment on company culture and retained key talent, the next step is to integrate AI systems and operations smoothly. Without a clear plan, this process can stretch far beyond expectations. For instance, while pitch decks often promise a 12-month integration timeline, AI deployment in mergers and acquisitions (M&A) typically takes about 18 months [7]. A solid integration plan not only keeps the timeline in check but also ensures the acquisition retains its intended value. The process starts with setting up strong governance structures.

Establishing Governance Structures

To lay the groundwork for integration, create a cross-functional team that includes data scientists, compliance officers, risk experts, and ethics specialists. This team’s role is to build a framework for responsible AI use, covering areas like data governance, ethics, and accountability [9]. For example, standardize how data is sourced, licensed, and stored to meet privacy regulations such as GDPR and align with frameworks like the NIST AI Risk Management Framework [9].

"Understanding culture, and proactively managing it, is critical to a successful integration." - McKinsey [8]

Transparency is key. Require documentation such as model cards, performance reports, data usage policies, and audit logs to keep all processes clear and accountable [9]. Additionally, establish a shared vocabulary early on. Miscommunication often arises when teams use the same terms but mean different things, and this can derail progress [8].

Consolidating AI Systems and Workflows

After governance is in place, the focus shifts to merging AI tools and workflows from both companies. This process generally unfolds in four phases: Assessment (Months 1–3), Infrastructure (Months 2–5), Pilot Deployment (Months 4–8), and Scale (Months 8–18) [7].
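Note that these phases overlap rather than run back to back. A small sketch makes the overlap explicit by listing which phases are active in a given month. The month ranges come from the timeline above; the data structure and function name are just one way to represent it.

```python
# (phase name, first month, last month), inclusive, as given in the timeline above.
PHASES = [
    ("Assessment", 1, 3),
    ("Infrastructure", 2, 5),
    ("Pilot Deployment", 4, 8),
    ("Scale", 8, 18),
]


def active_phases(month: int) -> list[str]:
    """Phases running during the given month after close."""
    return [name for name, start, end in PHASES if start <= month <= end]


for month in (1, 2, 4, 8, 12):
    print(month, active_phases(month))
```

In months 2 through 5 and again in month 8, two phases run in parallel, so staffing plans should account for teams working on both at once.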

During the Assessment phase, map out where client data resides, evaluate its format, and determine whether it’s stored in the cloud or on-premises [7]. Leverage Generative AI tools to streamline this process by analyzing vendor names, spend categories, and duplicate contracts [10]. For example, AI can reveal if both companies are paying for separate CRM or ERP systems and suggest which one to retain.

Before introducing new AI systems, migrate to standardized platforms and clean up historical data [7]. Go beyond official workflow documentation to identify repetitive, high-volume tasks that could benefit from AI automation [7]. Start small with pilot programs, rolling out new systems to select teams or processes for testing [7].

To maintain client loyalty during this period, prioritize the top 20% of clients with personal outreach and regular check-ins for at least a year. This proactive approach can help prevent client loss. Successful integrations often achieve client retention rates of 85–95%, while poorly executed ones see rates dip below 80% [7].
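Both steps here, picking the priority clients and tracking retention, are easy to compute from a client list. The sketch below assumes "top 20%" means the top fifth of clients ranked by revenue, which the text does not specify, and the client names and revenue figures are made up for illustration.

```python
import math


def top_clients(revenue_by_client: dict[str, float], share: float = 0.20) -> list[str]:
    """Clients in the top `share` by revenue, highest first (rounded up to a whole client)."""
    ranked = sorted(revenue_by_client, key=revenue_by_client.get, reverse=True)
    return ranked[: math.ceil(len(ranked) * share)]


def retention_rate(clients_at_close: set[str], clients_now: set[str]) -> float:
    """Fraction of the clients present at close who are still clients now."""
    if not clients_at_close:
        raise ValueError("need at least one client at close")
    return len(clients_at_close & clients_now) / len(clients_at_close)


# Hypothetical book of ten clients with descending revenue.
revenue = {f"client_{i}": 100_000 - 5_000 * i for i in range(10)}
at_close = set(revenue)
one_year_later = (at_close - {"client_7"}) | {"new_client"}

print(top_clients(revenue))
print(f"{retention_rate(at_close, one_year_later):.0%}")
```

New clients won after close are deliberately excluded from the retention figure, since the question is how much of the acquired book survived the integration.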

Tracking and Adjusting Integration Progress

Once systems and workflows are consolidated, continuous monitoring becomes crucial to ensure long-term success. Treat post-merger integration as an ongoing process rather than a one-time task, especially if you’re working within a "buy-and-build" strategy [10].

One common hurdle is the lack of clearly defined outcomes and measurable KPIs for tracking integration progress [10]. Start by standardizing data with AI tools to merge key datasets. This enables quick identification of redundancies and inefficiencies [10]. AI can also help pinpoint "tactical tail spend" - those small, irregular purchases that often bypass formal procurement processes and lead to waste [10].
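Once purchase data from both companies sits in one format, flagging tail spend can be as simple as a filter. The sketch below is an assumption-laden illustration: it treats a purchase as tail spend if it is under a chosen dollar threshold and has no purchase order. The `Purchase` fields, the $1,000 cutoff, and the vendor names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Purchase:
    """One row of a hypothetical merged spend ledger."""
    vendor: str
    amount_usd: float
    has_purchase_order: bool


def tail_spend(purchases: list[Purchase], max_amount_usd: float = 1_000.0) -> list[Purchase]:
    """Small purchases that bypassed formal procurement (no purchase order)."""
    return [
        p for p in purchases
        if p.amount_usd <= max_amount_usd and not p.has_purchase_order
    ]


def tail_spend_by_vendor(purchases: list[Purchase], max_amount_usd: float = 1_000.0) -> dict[str, float]:
    """Total tail spend per vendor, to show where the small purchases add up."""
    totals: dict[str, float] = {}
    for p in tail_spend(purchases, max_amount_usd):
        totals[p.vendor] = totals.get(p.vendor, 0.0) + p.amount_usd
    return totals


# Illustrative ledger only.
ledger = [
    Purchase("CloudCo", 12_000.0, True),
    Purchase("StickerShop", 240.0, False),
    Purchase("StickerShop", 310.0, False),
    Purchase("AdHocLabeling", 950.0, False),
    Purchase("AdHocLabeling", 4_500.0, False),
]

print(tail_spend_by_vendor(ledger))
```

Grouping by vendor matters because individual tail purchases look trivial, and the waste only becomes visible in aggregate.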

"Adding well-taught and well-managed AI to your PMI tool kit is like provisioning aerial reconnaissance and precision weapons for those in the trenches: it can dramatically speed up their ability to zero in on the information they need." - AlixPartners [11]

If the deal involves earnouts or equity, it’s wise to tie performance tracking to specific, measurable results. Revenue-based metrics are often more straightforward than EBITDA-based ones, making them easier to define and monitor post-acquisition [10]. For companies that frequently acquire, AI systems can improve with every deal, enhancing both speed and accuracy in future integrations [10].

Conclusion: Preparing for Successful AI M&A

Mergers and acquisitions (M&A) in the AI space come with challenges that go beyond those of traditional deals. From undocumented systems and training data concerns to talent retention and intellectual property (IP) risks, these factors can complicate even the most promising transactions. The key to success lies in identifying these risks early. Waiting until after the deal closes to uncover compliance issues, biased algorithms, or hidden liabilities can jeopardize the entire acquisition.

To navigate these complexities, thorough technical due diligence is a must. This includes verifying AI models, auditing data ownership, and, when necessary, involving third-party experts. Retaining key talent is also critical - offering appropriate incentives and ensuring alignment within the organization can help preserve the team behind the AI, which is often the cornerstone of the deal's value.

Once due diligence is complete, establishing strong governance becomes the next priority. Cross-functional teams should focus on integrating AI systems, standardizing data practices, and ensuring compliance. To track progress effectively, implement clear KPIs and regularly monitor outcomes.

For founders of growth-stage companies, partnering with advisors who understand both AI and M&A can make a significant difference. Phoenix Strategy Group (https://phoenixstrategy.group) provides tailored M&A support, fractional CFO services, and data engineering expertise to help businesses scale, prepare for exits, and integrate successfully.

In this fast-changing AI M&A environment, taking a proactive and structured approach to risk management not only protects against costly surprises but also ensures that the full value of the deal is realized.

FAQs

What AI deal risks matter most before signing?

Before finalizing an AI-focused M&A deal, it's crucial to address several risks. Start by confirming that the AI models, data, and intellectual property involved are legally sound. This means checking for proper licensing, ensuring data has been sourced lawfully, and conducting thorough security tests to identify vulnerabilities.

It's also important to evaluate the AI models for potential biases, safety flaws, and any ethical issues that could arise. These factors can significantly impact the deal's success and the technology's usability.

Finally, make sure employment agreements, intellectual property ownership, and confidentiality rights are clearly outlined. This helps prevent disputes and ensures the transaction is both secure and compliant with legal standards.

How can I confirm the AI works at scale?

To confirm that a target's AI works at scale, ask for evidence rather than demos. Have performance benchmarks reproduced in a different environment, test the system on messy, real-world data, and model GPU or token-based costs at the volumes you expect after the deal. It also helps to know that AI is already operating at scale inside the M&A process itself. Early users report automating due diligence and cutting review times by as much as 90%, and AI has been used to predict labor synergies with accuracy comparable to traditional methods. Research also indicates that companies using AI more broadly in M&A tend to unlock greater value and operate more efficiently.

What red flags indicate training data or IP problems?

Red flags related to training data or intellectual property (IP) issues often include the use of pirated datasets, retaining works that infringe on copyrights, or unresolved disputes over ownership and licensing. These problems can create serious legal liabilities and spark IP conflicts, which can become major risks during mergers and acquisitions (M&A) processes.