AI Governance for Financial Services: Best Practices

Artificial intelligence (AI) is transforming financial services, but its growing influence brings serious risks. Poor governance can lead to regulatory fines, reputational harm, and customer mistrust. This article explores how financial institutions can create effective AI governance frameworks to ensure compliance, ethical use, and operational transparency. Key points include:

- Regulatory Risks: Non-compliance with frameworks like the EU AI Act or U.S. regulations can be costly; under the EU AI Act, fines for the most serious violations can reach 7% of global annual turnover.

- New U.S. Framework: The Financial Services AI Risk Management Framework (FS AI RMF), introduced on February 19, 2026, outlines 230 control objectives.

- Challenges for Companies: Growth-stage firms struggle with regulatory uncertainty, opaque AI systems, and managing data from over 1,000 sources.

- Best Practices: Include clear AI model inventories, risk-based governance, stakeholder accountability, and regular audits.

AI governance isn’t just about avoiding penalties - it builds trust and strengthens operations. Learn how to integrate these strategies into your financial services business.



Regulatory Frameworks for AI Compliance

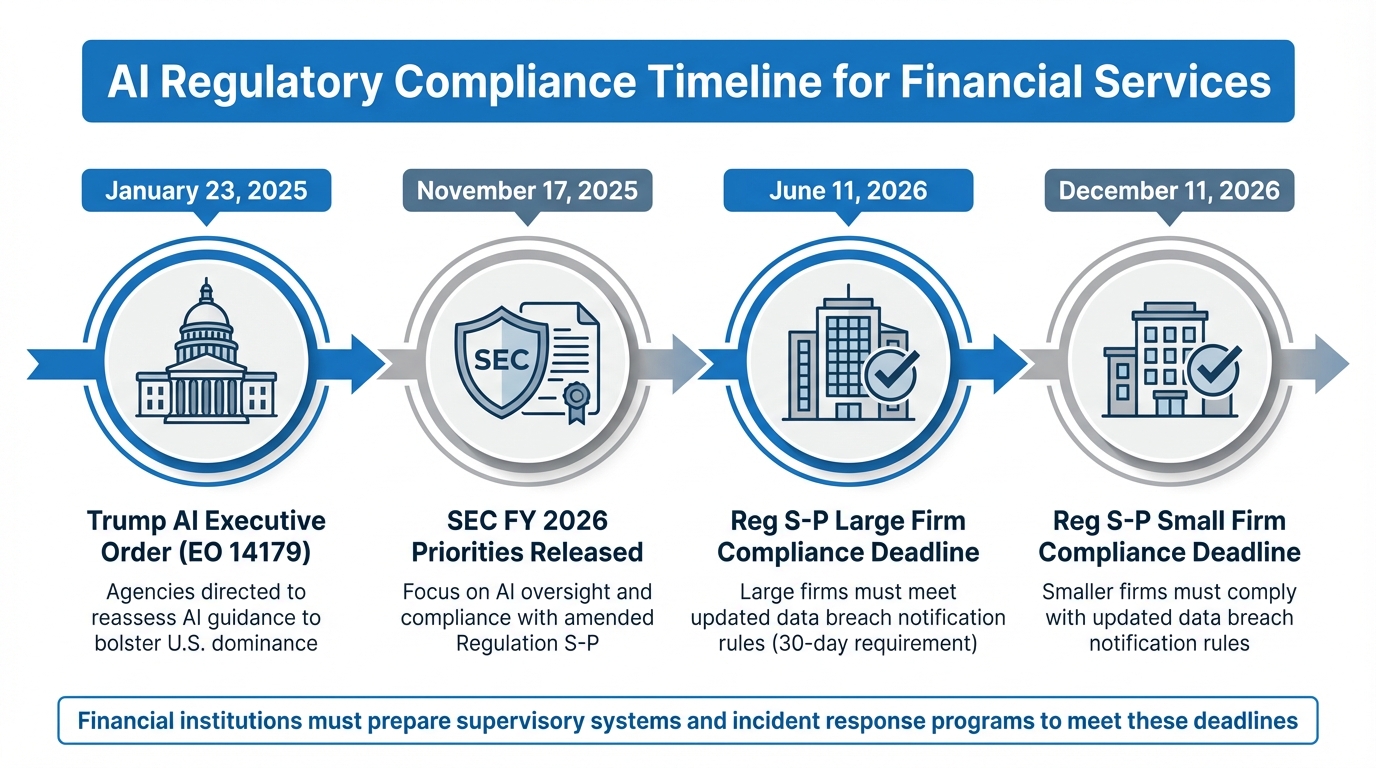

AI Regulatory Compliance Timeline for Financial Services 2025-2026

In financial services, regulatory frameworks are at the heart of ensuring AI compliance. Institutions using AI must navigate a web of rules, as both the SEC and FINRA maintain technologically neutral standards. This means that obligations like supervision, recordkeeping, and risk management remain the same, whether processes are manual or AI-driven [7][10].

This technology-neutral stance offers clarity but also complexity. Firms don’t need to wait for AI-specific regulations to understand their obligations, but advanced AI systems demand precise interpretation and stringent controls. A significant shift occurred in January 2025, when Executive Order 14179 replaced older AI mandates, urging agencies to develop plans to achieve "American global AI dominance" [7]. Below is a closer look at how these principles translate into actionable oversight.

SEC and FINRA Guidelines for AI in Finance

The SEC and FINRA have outlined their expectations through guidance and enforcement actions. For example, investment advisers, often supported by fractional CFO services, must ensure that AI-generated advice adheres to fiduciary duties, including the "Duty of Care" and "Duty of Loyalty." This requires avoiding conflicts of interest and being able to explain how algorithmic decisions are made - black-box systems are not acceptable [8].

Under FINRA Rule 3110, firms must establish supervisory systems with Written Supervisory Procedures (WSPs) that specifically address the risks of AI and GenAI. These risks include accuracy issues, bias, and cybersecurity vulnerabilities, all of which must be incorporated into updated WSPs [9][10]. The SEC has also prioritized AI oversight for FY 2026, with Acting Director Keith Cassidy emphasizing:

"a shift toward a more transparent and practical examination process, aiming to foster constructive dialogue with market participants rather than pursuing a 'gotcha' regulatory environment" [8].

In addition, the amended Regulation S-P, finalized in 2024, requires firms to implement written incident response programs for data breaches. Affected individuals must be notified within 30 days of determining a breach. Large firms have until June 11, 2026, to comply, while smaller firms have until December 11, 2026 [8].

Recent enforcement actions highlight the importance of compliance. In January 2025, Two Sigma Investments and Two Sigma Advisors paid $90 million in penalties for algorithmic vulnerabilities linked to unauthorized changes in trading parameters [11]. Similarly, FINRA fined Brex Treasury $900,000 in August 2024 for anti-money laundering failures caused by an automated identity verification algorithm, which allowed over $15 million in suspicious transactions [11]. Interactive Brokers also faced a $475,000 fine in late 2024 for segregation deficits of $30 million, stemming from a flawed algorithm in its securities lending program [11].

The SEC is also targeting "AI washing", where firms make exaggerated or misleading claims about their AI capabilities. Such practices can lead to enforcement actions and reputational harm. Firms must ensure their marketing materials accurately reflect their AI systems and capabilities [8][11].

New AI Regulatory Trends

Emerging trends are shaping how firms approach AI compliance. One notable development is the rise of autonomous "AI agents", systems that operate without predefined logic. FINRA has identified these as high-risk, requiring new supervisory protocols. Such systems can act beyond their intended scope, lack proper auditability, and even produce "hallucinations", where inaccurate information is presented as fact [9][10].

FINRA has been clear:

"FINRA's rules - which are intended to be technologically neutral - and the securities laws more generally, continue to apply when firms use GenAI or similar technologies" [10].

The organization also advises that firms using GenAI tools in their supervisory systems must evaluate the integrity, reliability, and accuracy of the AI models involved [10]. To mitigate risks like bias, hallucinations, and intellectual property concerns, firms are expected to document their training data sources [7][10]. Erik Gerding, Director of the SEC Division of Corporation Finance, further noted:

"As companies incorporate the use of artificial intelligence into their business operations, they are exposed to additional operational and regulatory risks" [7].

In another development, the SEC withdrew its July 2023 proposal on Predictive Data Analytics in June 2025. However, discussions from a March 2025 roundtable suggest that more practical rules may emerge in the future [11]. Meanwhile, the CFTC issued a staff advisory in December 2024, urging derivatives market participants to update their policies to address AI-specific risks in areas like risk management and recordkeeping [7].

| Milestone | Date | Regulatory Note |
|---|---|---|
| Trump AI Executive Order | Jan 23, 2025 | Agencies directed to reassess AI guidance to bolster U.S. dominance [7]. |
| SEC FY 2026 Priorities Released | Nov 17, 2025 | Focus on AI oversight and compliance with amended Reg S-P [8]. |
| Reg S-P Large Firm Compliance | June 11, 2026 | Deadline for large firms to meet updated data breach rules [8]. |
| Reg S-P Small Firm Compliance | Dec 11, 2026 | Deadline for smaller firms to comply with updated data breach rules [8]. |

Core Components of AI Governance Frameworks

Building effective AI governance means blending model risk management with operational risk management to tackle the challenges AI brings. IBM highlights this point:

"Agentic AI does not require a new, AI-specific governance regime, but rather a continuous and disciplined application of Model Risk Management" [14].

The first step is to establish clear AI classifications and maintain a complete inventory of models. Organizations need to define what qualifies as AI versus traditional rule-based systems and ensure all AI models and applications in production are tracked [17]. Without this foundation, governance efforts risk becoming disorganized. For example, the FINOS AI Governance Framework v2.0, released in November 2025, identifies 46 specific risks tied to AI in financial services and offers actionable controls that institutions can integrate into their operations [15].
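As a rough illustration of the inventory idea, the sketch below records each system with an AI versus rule-based classification, an accountable owner, and a risk rating. The class names, fields, and rating scale are assumptions made for this example; they are not taken from the FINOS framework or any regulator's template.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import Dict, List, Optional


class SystemKind(Enum):
    AI_ML = "ai_ml"            # behavior learned from data
    RULE_BASED = "rule_based"  # hand-written deterministic logic


@dataclass
class InventoryEntry:
    name: str
    kind: SystemKind
    owner: str                 # accountable person or team
    use_case: str
    in_production: bool
    risk_rating: str           # illustrative scale: "low", "medium", "high"
    last_validated: Optional[date] = None


@dataclass
class ModelInventory:
    entries: Dict[str, InventoryEntry] = field(default_factory=dict)

    def register(self, entry: InventoryEntry) -> None:
        # Refuse duplicates so every system has exactly one record.
        if entry.name in self.entries:
            raise ValueError(f"{entry.name} is already registered")
        self.entries[entry.name] = entry

    def production_ai(self) -> List[InventoryEntry]:
        # Only AI/ML systems that are live fall in scope here.
        return [
            e for e in self.entries.values()
            if e.kind is SystemKind.AI_ML and e.in_production
        ]

    def unvalidated(self) -> List[str]:
        # Live AI/ML systems with no recorded validation date.
        return [e.name for e in self.production_ai() if e.last_validated is None]


inventory = ModelInventory()
inventory.register(InventoryEntry(
    "credit_scoring_v3", SystemKind.AI_ML, "Credit Risk",
    "loan underwriting", True, "high"))
inventory.register(InventoryEntry(
    "fee_schedule_rules", SystemKind.RULE_BASED, "Finance Ops",
    "fee calculation", True, "low"))
print(inventory.unvalidated())
```

Keeping the AI versus rule-based distinction as an explicit field forces the definitional question to be answered once per system, at registration time.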

Risk-Based Approach to AI Deployment

A risk-based approach tailors governance strategies to the specific threats posed by each AI application. Instead of applying one-size-fits-all policies, financial institutions should rely on heuristic risk identification frameworks to pinpoint the operational, security, or regulatory risks most relevant to each use case [13]. This process involves scenario analysis and stress testing to uncover potential failures before they happen.

For instance, the Monetary Authority of Singapore’s thematic review from December 2024 outlines best practices for managing AI model risks, stressing the need for rigorous validation and ongoing performance monitoring [16]. Techniques like version pinning and change notifications ensure untracked model updates don’t slip through [13]. Research on WikiChat shows that grounding large language models in reliable sources like Wikipedia can boost factual accuracy to 97.9%, a 55.0% improvement over baseline GPT-4 performance [13].
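Version pinning with change notifications can be approximated by fingerprinting the approved model artifact and alerting when the deployed artifact no longer matches. This is a minimal sketch: the `PINNED` registry, the model name, and the alert strings are hypothetical, and a real deployment would read artifacts from a model registry rather than in-memory bytes.

```python
import hashlib
from typing import Dict, Optional


def fingerprint(model_bytes: bytes) -> str:
    """Return a SHA-256 digest identifying one exact model artifact."""
    return hashlib.sha256(model_bytes).hexdigest()


# Hypothetical registry of approved (pinned) artifacts, keyed by model name.
PINNED: Dict[str, str] = {"fraud_detector": fingerprint(b"weights-v1")}


def check_pin(name: str, model_bytes: bytes,
              pinned: Dict[str, str] = PINNED) -> Optional[str]:
    """Return an alert message if the artifact is unpinned or has changed."""
    expected = pinned.get(name)
    if expected is None:
        return f"ALERT: {name} has no pinned version"
    actual = fingerprint(model_bytes)
    if actual != expected:
        return f"ALERT: {name} changed (expected {expected[:8]}, got {actual[:8]})"
    return None  # artifact matches the approved version


print(check_pin("fraud_detector", b"weights-v1"))  # matches the pin
print(check_pin("fraud_detector", b"weights-v2"))  # untracked update
```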

By using tools like scenario analysis, firms can more effectively define oversight roles and responsibilities.

Stakeholder Engagement and Accountability

For AI governance to succeed, clear ownership across departments is critical. The Three Lines of Defense (3LoD) model provides a structured way to embed AI oversight into existing risk management systems [14]. Key players - senior management, legal teams, developers, and compliance officers - must have clearly defined roles, ensuring every AI-driven decision involves either a human-in-the-loop or an accountable owner [17].

| Governance Component | Description | Key Stakeholders |
|---|---|---|
| Definitions | Clarify what qualifies as AI versus rule-based systems | Senior Management, Legal, Tech |
| Inventory | Maintain a comprehensive list of AI/ML models in production | Developers, Compliance |
| Policy/Standards | Establish rules for data ethics, fairness, and security | Risk Management, Legal |
| Controls Framework | Implement safeguards (preventive, detective, adaptive) | InfoSec, Engineering |

Collaboration between compliance, tech, legal, and risk teams aligns AI deployments with regulatory requirements [12]. The AIRS working group, which focuses on AI/ML risk in finance, has expanded to nearly 40 members from various institutions since its start in 2019, illustrating the value of shared learning in shaping governance practices [17].

This collaborative structure helps prepare organizations for broader integration.

Company-Wide Integration

Once accountability is established, integrating governance across the organization strengthens these controls. AI governance should seamlessly fit into existing risk management structures [17]. The FINOS framework promotes machine-readable controls through tools like CALM (Common Architecture Language Model), which allows companies to visualize and automate AI governance systems. Additionally, tier-based controls - preventive, detective, and adaptive mechanisms - are vital for managing AI autonomy [14][15].

Regular training for technical teams, risk managers, and leadership ensures everyone understands AI’s capabilities and limitations [15]. As the FINOS AI Governance Framework puts it:

"AI systems in financial services must comply with the same regulatory standards as human-driven processes, including those related to suitability, fairness, record-keeping, and marketing conduct" [12].

Risk Management and Ethics Best Practices

As financial institutions adopt AI technologies, they face a pressing need to address the ethical and operational risks these systems bring. The potential consequences are serious - biased AI models can lead to regulatory fines, harm to reputation, and negative impacts on customers. The FINOS AI Governance Framework highlights this concern:

"The implications of deploying biased AI systems are far-reaching for financial institutions, encompassing regulatory sanctions, reputational damage, and customer detriment" [18].

To navigate these challenges, institutions must focus on reducing bias, safeguarding data privacy, and implementing continuous oversight.

Bias Mitigation and Ethical AI Standards

AI systems can unintentionally reinforce discrimination if trained on flawed data or designed without proper safeguards. For instance, historical lending data often reflects past discriminatory practices, which can result in lower credit scores for minority applicants - even when their financial behaviors are comparable to others. Common problems include biased credit scoring, unfair loan approvals, discriminatory insurance pricing, and inconsistent customer service experiences.

In 2023, one financial institution reduced biased credit-scoring outcomes by 30% within six months through regular audits and human feedback loops [12]. To address bias effectively, institutions should:

- Rely on diverse and representative datasets during AI model training.

- Conduct regular audits to identify and address proxy variables linked to sensitive characteristics like race or gender.

- Use large language models to automate bias detection while ensuring human oversight for continuous improvement.
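One simple check a regular bias audit might include is comparing approval rates across groups. The sketch below computes each group's approval rate relative to a reference group and flags ratios below a threshold. The 0.8 default borrows the "four-fifths" rule of thumb as an assumption; the article does not prescribe a specific metric or cutoff, and the sample data is invented.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Decision = Tuple[str, bool]  # (group label, approved?)


def approval_rates(decisions: Iterable[Decision]) -> Dict[str, float]:
    """Share of approved decisions per group."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def impact_ratios(decisions: Iterable[Decision],
                  reference_group: str) -> Dict[str, float]:
    """Each group's approval rate divided by the reference group's rate."""
    rates = approval_rates(decisions)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has no approvals")
    return {g: r / ref for g, r in rates.items()}


def flag_groups(decisions: Iterable[Decision], reference_group: str,
                threshold: float = 0.8) -> List[str]:
    """Groups whose ratio falls below the (assumed) threshold."""
    ratios = impact_ratios(decisions, reference_group)
    return sorted(g for g, r in ratios.items() if r < threshold)


# Invented sample: group A approved 8 of 10, group B approved 5 of 10.
sample = ([("A", True)] * 8 + [("A", False)] * 2
          + [("B", True)] * 5 + [("B", False)] * 5)
print(flag_groups(sample, reference_group="A"))
```

A flag from a check like this is a prompt for human review, not a finding of discrimination; proxy-variable analysis would still be needed to understand the cause.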

Beyond addressing bias, protecting sensitive information is equally critical.

Data Privacy and Security Controls

AI systems must comply with strict data privacy and security regulations. For example, the Federal Reserve's Supervisory Letter SR 11-7 underscores the importance of managing model risks within regulatory frameworks [6]. Similarly, the EU AI Act classifies certain financial AI applications as high-risk, demanding greater transparency and human oversight [12]. A robust data governance framework is essential, covering everything from data quality to classification and security throughout the AI lifecycle.

In March 2025, a financial institution rolled out automated data quality checks and classification protocols, which reduced compliance issues by 30% and improved the accuracy of AI-driven decisions [5]. To ensure data privacy and security, institutions should:

- Establish clear data handling policies.

- Use automated tools for data discovery and profiling.

- Conduct regular audits to identify and address compliance gaps.

- Provide employee training on data privacy protocols to foster a culture of accountability.

Real-Time Monitoring and AI Risk Scorecards

Continuous monitoring is key to detecting and addressing issues early. Effective monitoring systems focus on five critical areas: logging and audit trails, performance tracking, model behavior analysis, security event detection, and user interaction monitoring [20]. This includes keeping an eye on model outputs, confidence scores, prediction drift, concept drift, and data drift to ensure stability over time.

Institutions should collect observability data during normal operations to establish performance baselines. Automated alerts can then flag deviations or breaches of predefined thresholds [20]. Centralized log management via SIEM systems allows for efficient correlation and root cause analysis. For high-risk applications like credit scoring and fraud detection - identified under frameworks like the EU AI Act - monitoring must also ensure explainability data is available for model outputs [12][21]. By continuously tracking system performance and access logs, institutions can quickly detect and resolve issues [20].
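The baseline-then-alert pattern for data drift can be sketched with the Population Stability Index (PSI), one common drift statistic. PSI is used here as an illustrative choice, not something the cited frameworks mandate. The bin count and the 0.25 alert threshold are conventional rules of thumb and should be treated as assumptions.

```python
import math
from typing import List, Sequence


def psi(baseline: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `current` against `baseline`."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread to bin")
    width = (hi - lo) / bins

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp outliers into edge bins
            counts[idx] += 1
        # Floor each share at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_alert(baseline: Sequence[float], current: Sequence[float],
                threshold: float = 0.25) -> bool:
    """True when PSI exceeds the (assumed) alert threshold."""
    return psi(baseline, current) > threshold


baseline_scores = [i / 100 for i in range(100)]       # spread over 0.00 to 0.99
shifted_scores = [0.5 + i / 200 for i in range(100)]  # squeezed into upper half
print(drift_alert(baseline_scores, baseline_scores))
print(drift_alert(baseline_scores, shifted_scores))
```

The same function can be run per feature for data drift or on model output scores for prediction drift; concept drift needs labeled outcomes and is not covered by this sketch.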

Scalable AI Governance for Growth-Stage Companies

Growth-stage financial companies need AI governance frameworks that can keep pace with rapid growth. The key is a phased implementation approach: start with assessment and planning, move to designing the governance framework, follow with implementation (including training and technology integration), and finish with ongoing monitoring and auditing [3][6]. This step-by-step process avoids the pitfalls of retrofitting governance onto existing AI systems. Equally critical is forming a cross-functional governance committee. This group - comprising members from compliance, legal, data science, IT, risk, and business operations - ensures that accountability is shared across the organization [6].

Enterprise-Wide Data Strategy

A unified data strategy is the backbone of scalable AI governance. Managing data can be overwhelming - 41% of data leaders report challenges with handling over 1,000 data sources [2]. Automated governance tools can ease this burden. One key step is classifying data by sensitivity (Public, Internal, Confidential, Restricted) right at the point of ingestion. This ensures that downstream AI systems inherit the appropriate controls [19].
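To make "downstream systems inherit the appropriate controls" concrete, the sketch below tags each dataset with one of the four sensitivity levels at ingestion and gives any derived dataset the highest level among its parents. The environment names and their ceilings are hypothetical, added only to show how an inherited label could drive an access decision.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Dataset:
    name: str
    sensitivity: Sensitivity  # assigned once, at the point of ingestion


def derive(name: str, *parents: Dataset) -> Dataset:
    """A derived dataset inherits the highest sensitivity of its parents."""
    if not parents:
        raise ValueError("a derived dataset needs at least one parent")
    return Dataset(name, max(p.sensitivity for p in parents))


# Hypothetical environments and the highest sensitivity each may hold.
ENV_CEILING: Dict[str, Sensitivity] = {
    "general_ai_sandbox": Sensitivity.INTERNAL,
    "restricted_enclave": Sensitivity.RESTRICTED,
}


def may_enter(dataset: Dataset, environment: str,
              authorized: bool = False) -> bool:
    """Allow entry if within the ceiling or explicitly authorized."""
    return authorized or dataset.sensitivity <= ENV_CEILING[environment]


marketing = Dataset("marketing_copy", Sensitivity.PUBLIC)
accounts = Dataset("account_balances", Sensitivity.CONFIDENTIAL)
features = derive("churn_features", marketing, accounts)

print(features.sensitivity.name)
print(may_enter(features, "general_ai_sandbox"))
```

Using an ordered enum means "inherit the strictest label" reduces to taking a maximum, which keeps the rule easy to audit.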

AI systems are only as good as the data they’re built on. To maintain quality, establish thresholds for metrics like accuracy, completeness, consistency, timeliness, relevance, and representativeness [19]. Documenting data origins, transformations, and usage is also essential for regulatory audits and ensuring model transparency [19][22]. As Anshuman Prasad, Global Head of Risk Analytics at Crisil, explains:

"Foundational governance pillars such as data quality, metadata management and taxonomy management will not only require more emphasis, but also need to adapt to support the underlying data that fuels these [AI] capabilities" [22].

Growth-stage companies should consider AI-powered data management platforms that automate governance tasks while adapting to shifting regulations [2]. Use technical safeguards to block highly confidential data from entering general-purpose AI development environments unless explicitly authorized [19]. Additionally, monitor data pipelines for changes in distribution or quality issues, as these can introduce bias or reduce model reliability [19]. With these measures in place, regular audits become a cornerstone for maintaining transparency.
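The quality thresholds and pipeline checks described in this section could look something like the following for two of the listed metrics, completeness and timeliness. The threshold values, field names, and sample rows are assumptions for illustration; appropriate thresholds depend on the use case.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Sequence, Tuple

# Hypothetical minimum acceptable scores per metric.
THRESHOLDS: Dict[str, float] = {"completeness": 0.98, "timeliness": 0.95}


def completeness(rows: List[dict], required: Sequence[str]) -> float:
    """Share of rows in which every required field is populated."""
    if not rows:
        return 0.0
    ok = sum(all(r.get(f) not in (None, "") for f in required) for r in rows)
    return ok / len(rows)


def timeliness(rows: List[dict], now: datetime, max_age: timedelta,
               ts_field: str = "updated_at") -> float:
    """Share of rows refreshed within `max_age` of `now`."""
    if not rows:
        return 0.0
    fresh = sum(now - r[ts_field] <= max_age for r in rows)
    return fresh / len(rows)


def quality_report(rows: List[dict], required: Sequence[str], now: datetime,
                   max_age: timedelta) -> Dict[str, Tuple[float, bool]]:
    """Map each metric to (score, passed_threshold)."""
    scores = {
        "completeness": completeness(rows, required),
        "timeliness": timeliness(rows, now, max_age),
    }
    return {m: (s, s >= THRESHOLDS[m]) for m, s in scores.items()}


rows = [
    {"id": 1, "income": 50000, "updated_at": datetime(2025, 1, 9)},
    {"id": 2, "income": None, "updated_at": datetime(2025, 1, 9)},   # incomplete
    {"id": 3, "income": 72000, "updated_at": datetime(2024, 12, 1)},  # stale
    {"id": 4, "income": 61000, "updated_at": datetime(2025, 1, 10)},
]
report = quality_report(rows, ["id", "income"],
                        now=datetime(2025, 1, 10), max_age=timedelta(days=7))
print(report)
```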

Regular Audits and Transparency Practices

Regular audits play a critical role by providing "detective controls" through detailed logging and documentation. These are vital for investigating security incidents and justifying AI decisions to regulators [23]. As the number of AI models grows, manual oversight becomes impractical. Automated compliance checks and continuous monitoring become the most practical ways to detect issues like model drift or bias [3].

A tiered implementation approach can help scale audits based on the risk level of specific AI applications [23]. For example, high-risk financial processes like credit scoring or fraud detection require comprehensive audit trails with cryptographic protection. On the other hand, moderate-stakes internal tools may only need basic flow reconstruction. This approach balances thoroughness with operational efficiency.

| Audit Tier | Recommended For | Key Control Focus |
|---|---|---|
| Tier 0 | Low-stakes applications | Minimal retention; human-in-the-loop oversight [23] |
| Tier 1 | Moderate-stakes; testing | Basic flow reconstruction; "what happened" vs "why" [23] |
| Tier 2 | Production; customer-facing | Explicit reasoning generation; natural language logic [23] |
| Tier 3 | High-risk transactions; autonomous | Comprehensive audit trail; cryptographic protection [23] |
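One way to read "cryptographic protection" for a Tier 3 audit trail is a hash chain, in which each log entry commits to the hash of the previous one so that later edits become detectable. The sketch below is an assumption about how such a log might be built, not a description of the cited framework's mechanism; the record fields are invented.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    # Serialize with sorted keys so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append(log: List[Dict[str, Any]], record: Dict[str, Any]) -> None:
    """Add a record that is chained to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": _digest(prev, record)})


def verify(log: List[Dict[str, Any]]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


audit_log: List[Dict[str, Any]] = []
append(audit_log, {"case": "loan-001", "decision": "deny", "score": 0.82})
append(audit_log, {"case": "loan-002", "decision": "approve", "score": 0.12})
print(verify(audit_log))

audit_log[0]["record"]["score"] = 0.10  # simulate after-the-fact tampering
print(verify(audit_log))
```

A chain like this is tamper-evident rather than tamper-proof: someone who can rewrite the whole log can rebuild the chain, so the latest hash would also need to be stored somewhere the log writer cannot modify.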

Model documentation should be thorough enough for a third party to recreate the model without needing access to the original development code [24]. Independent validations, conducted at least annually, can check for "model drift" and ensure alignment with current business needs [24]. Andrew Mount, Counsel at Eversheds Sutherland, highlights the complexity of AI oversight:

"It's probably the most difficult question for last - books and records requirements. When is an AI-generated communication a record of the firm? There's not a good answer to this yet" [4].

Such rigorous audits strengthen AI systems, even as regulatory landscapes evolve.

Training Programs for Responsible AI Use

Strong governance also depends on well-informed employees. Training programs should focus on ethics, data privacy, and the limitations of approved AI tools [2][6]. This goes beyond compliance - it ensures that everyone understands their role in maintaining governance as the company grows.

Assigning a Chief AI Officer (CAIO) or a senior compliance leader to oversee AI strategy and risk management is a smart move [6]. Maintain a detailed inventory of all active and proposed AI use cases, documenting associated risks and controls [6][4]. For high-risk applications like credit scoring or sensitive customer interactions, human review should always be part of the workflow [6].

Partnering with experts like Phoenix Strategy Group can help growth-stage companies establish governance frameworks that integrate seamlessly with their financial operations. Their focus on real-time financial data synchronization and KPI development ensures that AI systems are monitored alongside traditional financial metrics, enabling governance to scale with the business.

Integrating AI Governance into Financial Operations

Incorporating AI governance into daily operations requires building on existing Model and Operational Risk Management frameworks [14]. This involves embedding governance practices within the Three Lines of Defense (3LoD) model, ensuring accountability across business units, risk management teams, and internal audit functions [14]. For growth-stage companies, it’s critical to integrate governance early - ideally during the design phase of AI initiatives - rather than trying to retrofit it later. These strategies apply across key financial operations, from fraud detection to wealth management, ensuring a consistent approach to governance throughout the system.

Fraud Detection and Credit Scoring

Using AI for fraud detection or credit scoring comes with high stakes and strict regulatory scrutiny. For example, the EU AI Act categorizes these applications as "high-risk", requiring stronger transparency measures and human oversight [12]. Before deploying new AI tools, it’s essential to compare their performance against legacy systems to confirm they deliver better accuracy [5][24].

When relying on third-party AI models, compliance is non-negotiable. Companies should secure detailed vendor documentation and conduct independent validations annually [5][24]. This ensures transparency around "black box" algorithms by clarifying their underlying principles, data sources, and variables [5][24]. Such diligence helps mitigate risks and meet regulatory expectations.

Risk Management in Wealth Management and Compliance

AI governance also plays a crucial role in wealth management, where balancing technical performance with explainability is key. Regulators and customers alike demand clear, understandable justifications for algorithmic decisions. The ACPR outlines four key criteria for evaluating AI in finance: data management, performance metrics, lifecycle stability, and explainability [21]. To address these, companies can implement tiered explanation levels - ranging from basic observations to detailed justifications and replications - tailored to the needs of compliance teams and customers [21].

Human-in-the-loop (HITL) oversight is another essential safeguard, particularly for high-stakes decisions. HITL allows operators to review and either confirm or reject algorithmic outputs, helping to counteract automation bias, which can lead to overreliance on AI recommendations [21][1].
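A human-in-the-loop gate can be expressed as a routing rule: high-stakes or low-confidence outputs cannot be finalized without a reviewer's verdict. This is a minimal sketch; the confidence cutoff, field names, and verdict strings are assumptions, and real workflows would also log who reviewed what.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    case_id: str
    model_output: str   # e.g. "approve" or "deny"
    confidence: float   # model's own confidence, 0.0 to 1.0
    high_stakes: bool


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Send high-stakes or low-confidence outputs to a human reviewer."""
    if decision.high_stakes or decision.confidence < min_confidence:
        return "human_review"
    return "auto"


def finalize(decision: Decision, reviewer_verdict: Optional[str] = None) -> str:
    """Return the final outcome, requiring a verdict when review is needed."""
    if route(decision) == "human_review":
        if reviewer_verdict is None:
            raise RuntimeError(f"{decision.case_id}: human review required")
        return reviewer_verdict  # the reviewer may confirm or override
    return decision.model_output


loan = Decision("loan-001", "deny", confidence=0.97, high_stakes=True)
faq = Decision("chat-417", "send_faq_link", confidence=0.95, high_stakes=False)

print(route(loan))
print(finalize(faq))
print(finalize(loan, reviewer_verdict="approve"))
```

Making the reviewer's verdict the returned value, rather than a rubber stamp on the model output, is one small design choice that works against automation bias.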

Phoenix Strategy Group's Role in Supporting AI Governance

Phoenix Strategy Group provides tailored support to help growth-stage companies integrate AI governance into their financial operations. Their services include Fractional CFO support, FP&A systems, and Data Engineering, all designed to build strong data foundations with a focus on quality, metadata, and taxonomy [22]. They also synchronize real-time financial data with KPIs, enabling businesses to track both traditional metrics and AI performance.

Through their Integrated Financial Model and Monday Morning Metrics, Phoenix Strategy Group identifies early signs of model drift or compliance issues. For companies preparing for M&A or fundraising, they ensure that AI governance documentation meets the expectations of investors and auditors. This demonstrates that AI systems are operating within defined risk parameters, allowing governance practices to scale seamlessly as the business grows.

Conclusion

A solid AI governance framework does more than just tick compliance boxes - it enables financial institutions to innovate with confidence. When governance is executed effectively, it minimizes operational risks, avoids costly compliance breaches, and strengthens trust with both customers and investors. As noted by Tredence:

"Institutions that have strong governance are more confident in deploying advanced AI solutions because they know that the risks are under control. This creates a positive cycle where governance fuels innovation rather than slowing it down." – Tredence [1]

With the rise of autonomous AI systems, governance has become even more essential. The FINOS AI Governance Framework v2.0, now addressing 46 specific risks and their mitigations, highlights just how quickly the landscape is advancing [15]. This framework is designed to scale, making it indispensable as AI systems grow in complexity and autonomy. For growth-stage companies, the message is clear: embed governance early to avoid expensive retrofits later.

Start with the basics: assemble cross-functional committees with members from business, technology, legal, and compliance teams. Maintain a detailed inventory of AI models, complete with risk ratings, and use real-time monitoring tools to catch model drift early. These proactive steps not only protect your business but also ensure smoother adoption of advanced AI capabilities. Over time, these measures also make your organization more appealing to external evaluators like investors and auditors.

By adopting these governance practices, companies can go beyond compliance to strengthen their strategic position. For those navigating fundraising or mergers and acquisitions, well-documented AI governance demonstrates accountability and signals to stakeholders that risks are managed within clear boundaries. This can establish your organization as a reliable and forward-thinking partner.

AI governance is no longer a specialized concern - it’s now a cornerstone of risk management and trust-building in modern financial institutions. Companies that embrace governance as a strategic tool, rather than a regulatory burden, will be the ones best positioned to succeed as AI continues to transform the industry.

FAQs

What counts as “AI” for our governance inventory?

In the context of financial services, "AI" within the governance inventory refers to systems and models that leverage artificial intelligence or machine learning, as distinct from traditional rule-based systems. These technologies are applied across various areas such as compliance, risk management, decision-making processes, and customer interactions.

However, it's not just about functionality - every AI system must adhere to regulatory standards and uphold ethical principles to ensure responsible and fair use.

How do we decide which AI use cases are “high-risk”?

Determining what qualifies as a "high-risk" AI use case calls for a detailed and methodical risk assessment. Several critical factors come into play: the influence on decision-making processes, adherence to regulations, security concerns, and the effect on stakeholder confidence. To navigate this, heuristic frameworks can be incredibly useful. These frameworks help evaluate the context of the use case, the type of data being processed, the AI model's technology, the potential consequences of its outputs, and the safeguards in place. By following such a structured approach, you can pinpoint risks early and take steps to address them effectively.
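A heuristic assessment of this kind can be prototyped as a simple scoring function. In the sketch below, the factor names, the 0 to 3 scale, the cutoffs, and the rule that severe decision impact always yields "high" are all assumptions chosen for illustration; they do not reproduce any specific published framework.

```python
from typing import Dict

# Hypothetical factors, each scored 0 (negligible) to 3 (severe).
FACTORS = ("decision_impact", "data_sensitivity", "model_opacity", "autonomy")


def risk_tier(scores: Dict[str, int], safeguards: int = 0) -> str:
    """Classify a use case as "low", "medium", or "high" risk.

    `safeguards` (0 to 3) is a credit for controls already in place.
    """
    missing = [f for f in FACTORS if f not in scores]
    if missing:
        raise ValueError(f"missing factor scores: {missing}")
    # Severe consequences for customers override any mitigation credit.
    if scores["decision_impact"] == 3:
        return "high"
    total = sum(scores[f] for f in FACTORS) - safeguards
    if total >= 8:
        return "high"
    if total >= 4:
        return "medium"
    return "low"


credit_scoring = {"decision_impact": 3, "data_sensitivity": 3,
                  "model_opacity": 2, "autonomy": 1}
support_chatbot = {"decision_impact": 2, "data_sensitivity": 2,
                   "model_opacity": 2, "autonomy": 1}
internal_summarizer = {"decision_impact": 1, "data_sensitivity": 1,
                       "model_opacity": 2, "autonomy": 0}

print(risk_tier(credit_scoring, safeguards=3))
print(risk_tier(support_chatbot))
print(risk_tier(internal_summarizer, safeguards=1))
```

A score like this is a triage aid for deciding where to spend review effort; the final classification still belongs to the accountable owners.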

What evidence do regulators expect in an AI audit trail?

Regulators require an AI audit trail to provide comprehensive documentation of how decisions are made, including the factors influencing them, the reasoning involved, and the resulting outcomes. This documentation must include:

- Explainability mechanisms to clarify how and why decisions are reached.

- Real-time tracking to monitor AI behavior as it happens.

- Cross-session correlation to connect related actions or decisions over time.

- Tamper-evident logging to protect data integrity and support forensic investigations.

These elements are essential for meeting compliance standards and ensuring transparency in AI systems.